Student Perspectives and Concerns about the CSU’s Approach to GenAI

This is the second in a series of blogs by the CSU Student Success Network (Network) focusing on the California State University’s (CSU’s) systemwide approach to generative AI (GenAI). The first blog provided an introduction to the CSU’s strategic approach and an overview of its AI Commons.

At the CSU Board of Trustees’ meeting in November 2025, five undergraduate students from Cal State San Marcos addressed the Trustees during the public comment period. Each strongly urged the CSU to back away from its systemwide commitment to and investment in GenAI. Their concerns focused on what they described as:

- the irresistible incentives inherent in AI for students to plagiarize rather than develop their own critical thinking skills;

- a tragic history of AI offering emotional support to students who later died by suicide;

- the propensity of AI to provide false information and generate nonexistent sources;

- the extent to which AI companies have used data for surveillance;

- the negative impacts of data centers on Black and Brown communities; and

- the workforce disruptions that are being linked to AI adoption.

These concerns are well represented in public dialogue regarding the potential impacts of GenAI on education and the workforce,1 and they point to genuine fears that these students expressed about the adoption and use of GenAI as part of their university experience.

But how representative are these concerns of CSU students statewide? According to recent student survey data about GenAI, overwhelming majorities of CSU students share the kinds of concerns voiced by their fellow students at the Trustees’ meeting. Most CSU students also appear to have achieved basic AI literacy: they understand GenAI’s limitations and uses as a tool. Student responses were more mixed, however, about how they would like GenAI to be used at the university.

These findings are based on responses from about 70,000 students collected statewide at 22 CSU campuses in fall 2025, from a survey instrument designed by a team of “AI Fellows” at San Diego State University (SDSU). This team of middle leaders includes faculty, staff, administrators, and consultants: Dr. David Goldberg, Dr. James Frazee, Dr. Sean Hauze, Dr. Cory Knobel, Jerry Sheehan, and Dr. Elisa Sobo. Some of the team members’ research, based on these findings, can be found here.2

Student Concerns about GenAI

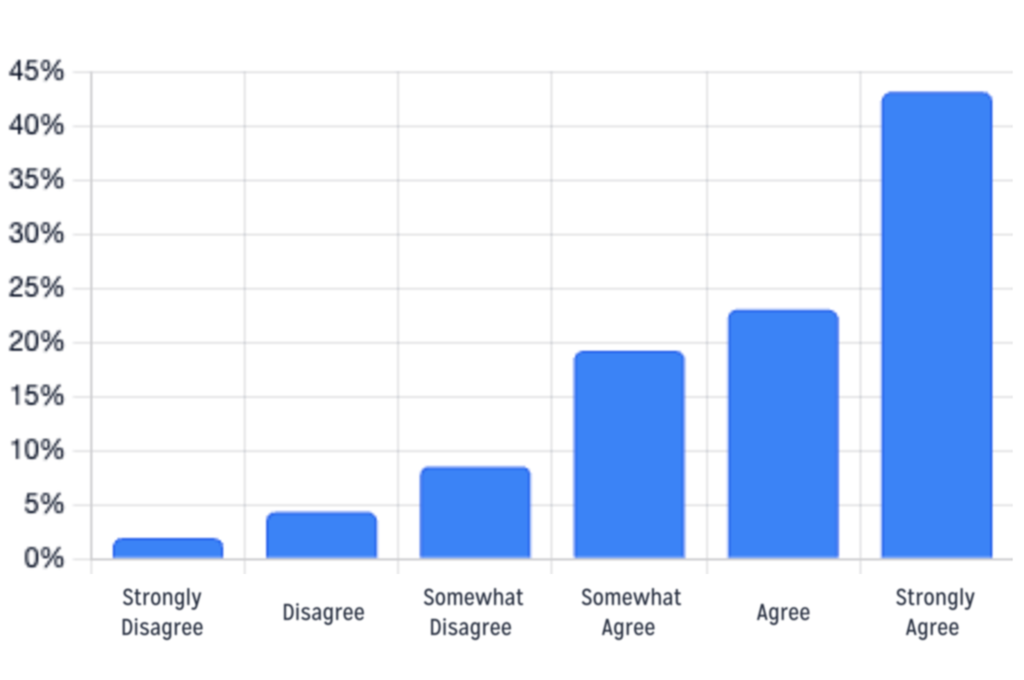

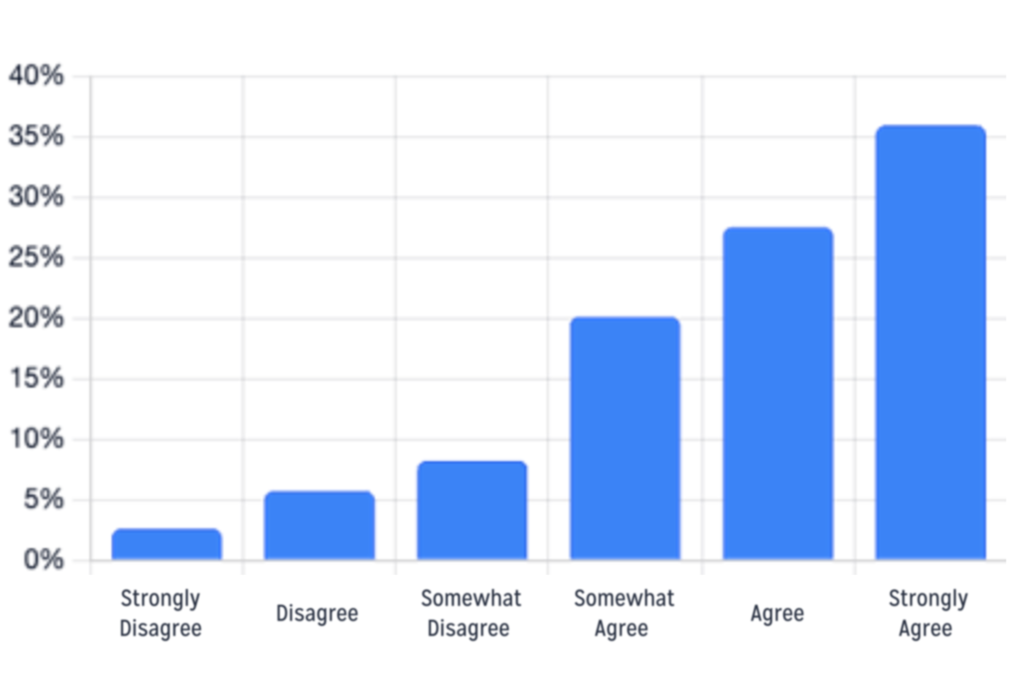

Large majorities of student respondents across the campuses reported concerns about GenAI: 85% have concerns about its long-term societal impact (see figure 1), 83% worry about its impact on personal privacy (see figure 2), and 80% are concerned about its environmental impacts. In addition:

- 77% of student respondents are concerned about the ethical use of GenAI,

- 82% are concerned about its impacts on job security, and

- 89% say that unregulated AI development may lead to unforeseen risks.

In each of these areas, CSU students are not indecisive. Rather, the largest blocks of students “strongly agree” that they have worries or concerns about these issues.

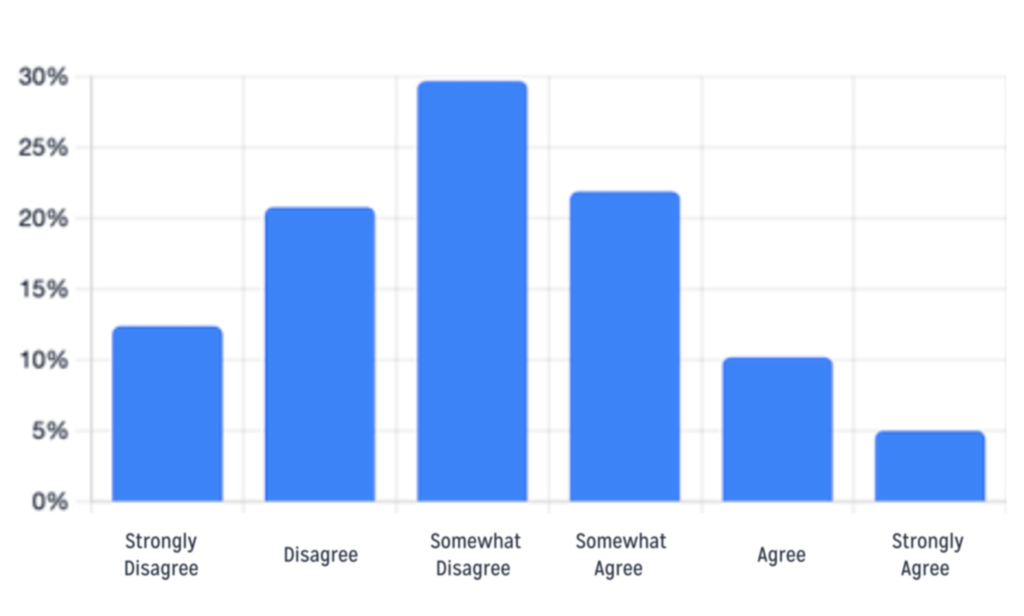

Figure 1. Responses from CSU Students to: “I have concerns about AI’s long-term societal impact”

Source: “AI All Campus Survey” designed by SDSU AI Fellows and presented on the AI Survey Dashboard.

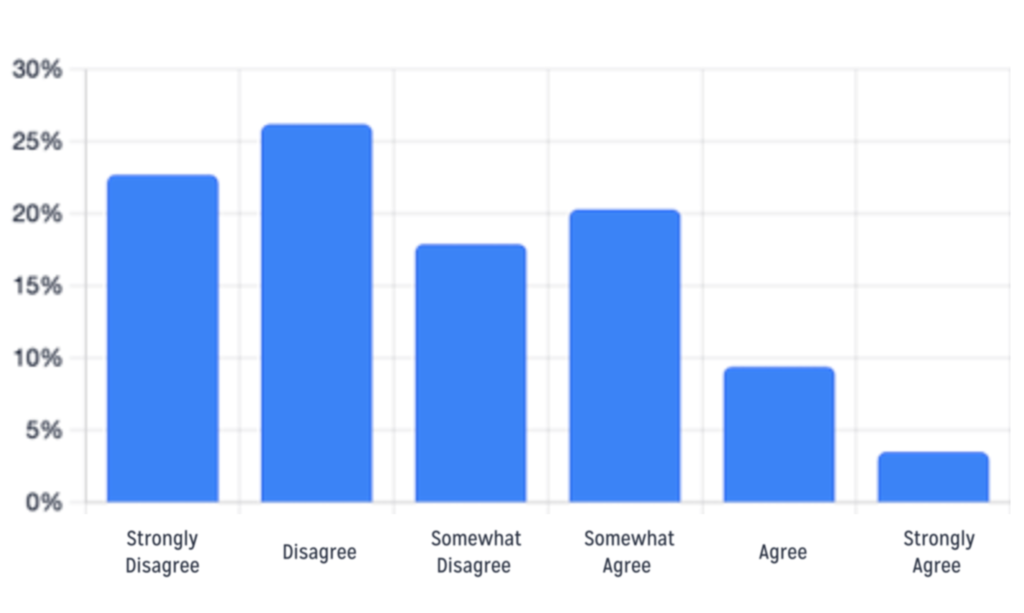

Figure 2. Responses from CSU Students to: “I worry about AI’s impact on personal privacy”

Source: “AI All Campus Survey” designed by SDSU AI Fellows and presented on the AI Survey Dashboard.

Student responses were more mixed about the impacts of AI technology on creativity and innovation. About 82% worry about AI’s negative impact on human creativity, but well over half (60%) think that AI technology has the potential to enhance creativity and innovation.

Student Awareness about and Use of GenAI

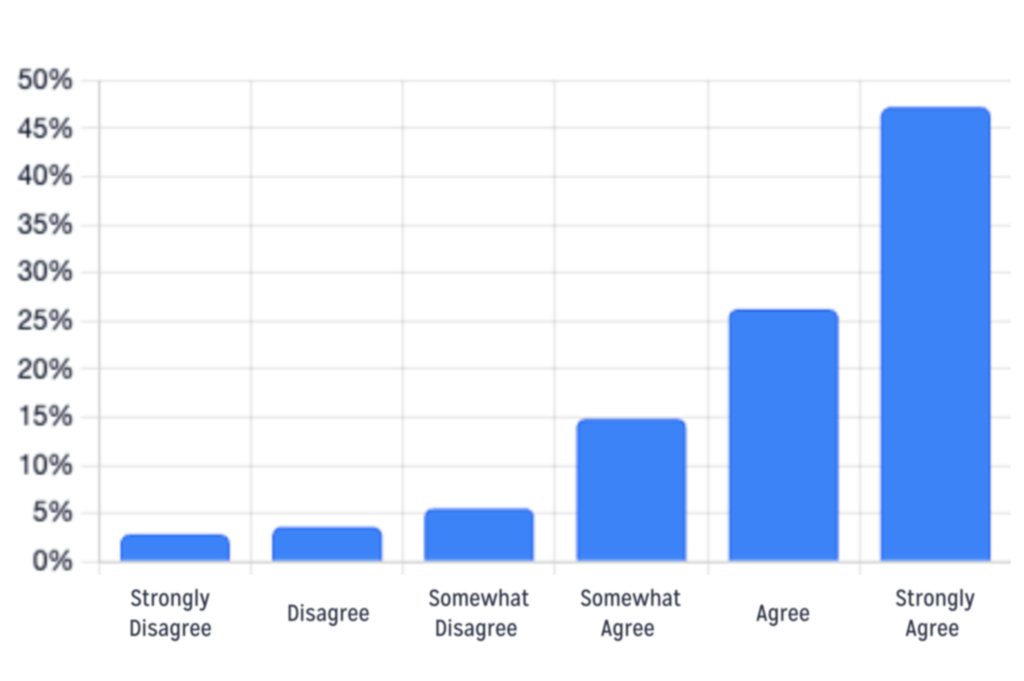

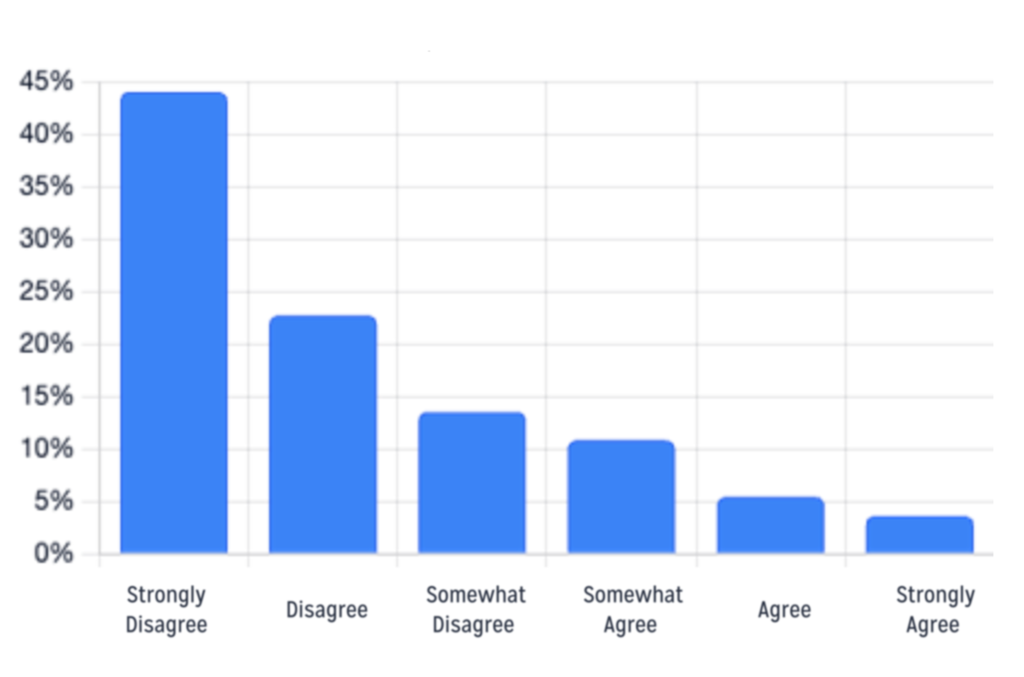

Based on the survey data, most CSU students appear to have achieved basic literacy about GenAI as a tool, but could use some direction and guidance from faculty or others about GenAI use. Nearly nine out of ten students (88%) do not trust the results of AI responses without verification (see figure 3). About 85% of students think that AI algorithms should be more transparent, and 62% do not trust the algorithms to provide accurate information. Nonetheless, nearly 20% are comfortable submitting a prompt to ChatGPT and turning in the answer it provides (see figure 4).

Figure 3. Responses from CSU Students to: “I feel that it is necessary to verify the validity and accuracy of the responses that AI generates”

Source: “AI All Campus Survey” designed by SDSU AI Fellows and presented on the AI Survey Dashboard.

Figure 4. Responses from CSU Students to: “I am comfortable submitting a prompt to an AI like ChatGPT and turning in the answer it provides”

Source: “AI All Campus Survey” designed by SDSU AI Fellows and presented on the AI Survey Dashboard.

Over 80% of student respondents feel confident that AI technology is not too complex for them to grasp. Most students (55%) regularly discuss AI topics with friends, family, or classmates, but fewer than half (42%) regularly follow news and updates about AI. About 53% use AI-powered tools or applications in their studies, and nearly two-thirds (65%) use AI outside their classwork.

Student Attitudes about AI at the CSU

Student responses were mixed regarding their exposure to AI at the CSU and what they would like to see regarding their AI use in the university.

About 64% of student respondents said that AI has positively affected their learning experience at the CSU. Nearly the same share (63%) disagreed with the statement that their curriculum lacks adequate exposure to AI (see figure 5). Viewed the other way, however, more than a third of students (37%) agreed that their curriculum lacks adequate exposure to AI, suggesting that large numbers of students could use additional guidance. Unlike the responses about concerns and worries reported above, student responses here were not as strongly voiced, with most falling in the middle of the spectrum: about 30% “somewhat disagreeing” and 22% “somewhat agreeing.”

Figure 5. Responses from CSU Students to: “My curriculum lacks adequate exposure to AI”

Source: “AI All Campus Survey” designed by SDSU AI Fellows and presented on the AI Survey Dashboard.

When asked specifically about their experience with professors, about 39% of student respondents said that their professors encourage the use of AI in coursework. A slightly lower share (33%) said that their professors teach them how to use AI effectively (see figure 6). As part of its systemwide guidelines for AI, the CSU encourages all faculty to establish clear policies on whether GenAI tools can or cannot be used by students for coursework, and if so, under what circumstances and conditions. Faculty are also expected to explore with students the range of GenAI tools available and the implications of their use or non-use in relation to their discipline.

Figure 6. Responses from CSU Students to: “My professors teach me how to use AI effectively”

Source: “AI All Campus Survey” designed by SDSU AI Fellows and presented on the AI Survey Dashboard.

Students were relatively evenly divided about the advantage of using AI for coursework, with 51% saying that its use provides an academic advantage and 49% saying that it does not. Nearly half of student respondents (49%) said they are interested in receiving formal training in AI through coursework or other resources, but a lower share (37%) said they are actively seeking opportunities to learn more about AI.

Looking to the future, nearly two-thirds of student respondents (65%) said they are skeptical about the benefits of AI in education. However, similar shares of students said that AI will become an essential part of most professions (69%) and that AI will play a significant role in their future career (58%).

Conclusion: Engaging Students in GenAI Policy Development

Most CSU students use GenAI tools both in and outside their classwork, and most appear to have achieved basic AI literacy in understanding some of the limitations of GenAI as a tool. They tend to have strong opinions and deep concerns about GenAI’s potential impacts on social justice, environmental issues, and personal privacy. They are uncertain about its effects on human creativity and innovation, and are skeptical about its benefits in education, but they are clear-eyed about its potential impacts on their future careers.

Substantial numbers of students appear to be interested in learning more about effective and appropriate use of GenAI. As CSU faculty, staff, and administrators work to sharpen university, programmatic, departmental, and classroom policies about GenAI use, they would do well to explore and understand the range of student attitudes and concerns about GenAI at their campus and engage students themselves in the process of GenAI policy development.

For a balanced and helpful take on the need for equitable and transparent policies governing GenAI in higher education, see Dr. Natalie V. Nagthall’s “The Politics of Intelligence: How AI Is Rewriting Educational Power Lines,” published recently by the Education Insights Center (EdInsights). The Network is facilitated by EdInsights at Sacramento State University.

1 See the first blog in this series for references to public concerns about GenAI.

2 The survey data for 2025 were downloaded from this dashboard in February 2026; these data are currently available only with a password.